Overview

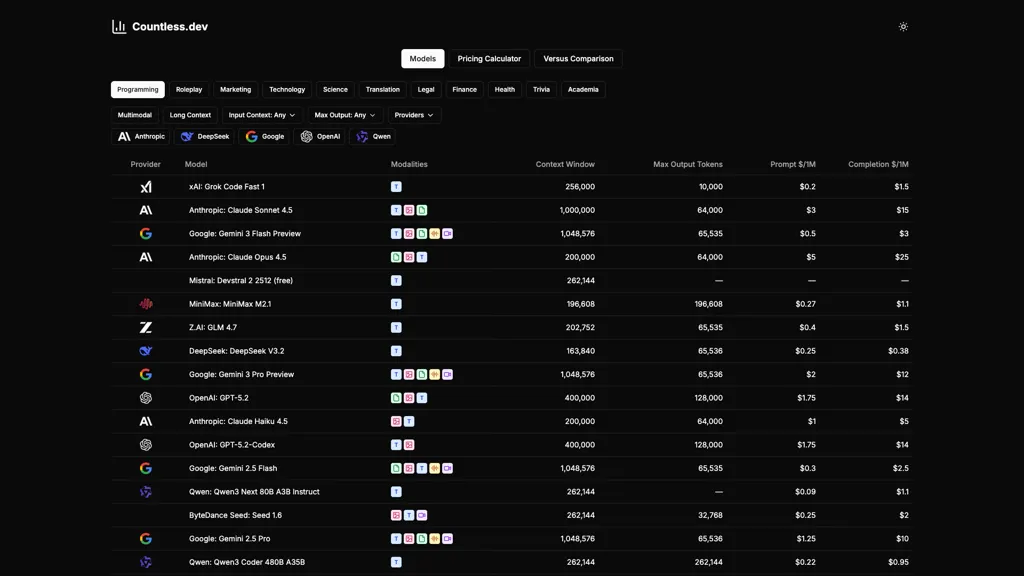

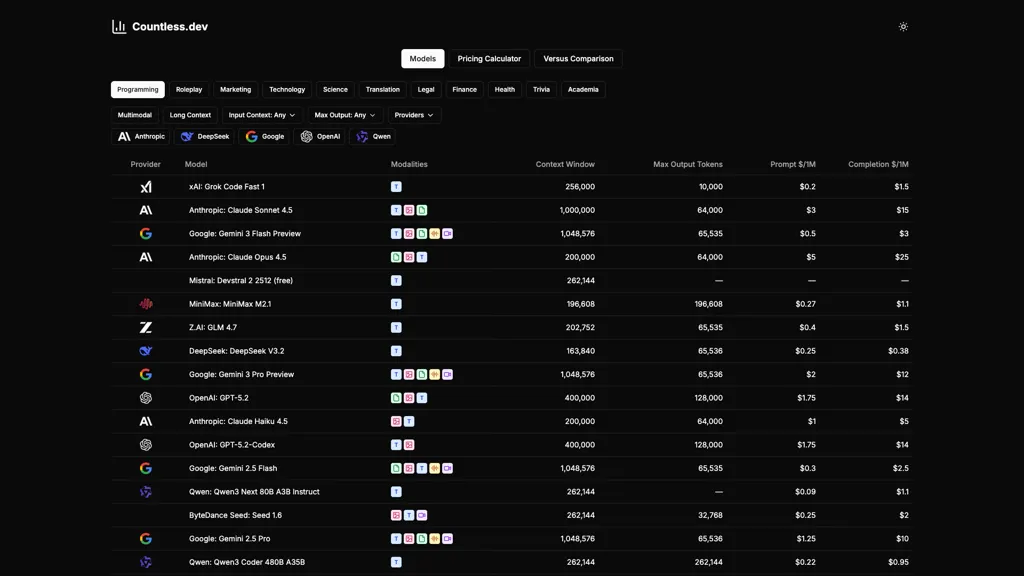

Compare LLMs and their pricing to make cost-effective deployment decisions.

llmarena.ai provides tools for comparing LLMs and their pricing across major providers.

Includes a pricing calculator and a side-by-side comparison of model specs such as context window, max output tokens, and modality support.

Offers filters and role-based categories (programming, marketing, translation, legal, finance, health, academia) for targeted model selection.

Displays provider and model names, token pricing metrics, routing options, and technical details to support deployment and cost planning.

Supports comparison of multimodal and long-context LLMs and sorting by token pricing, context window, and output capacity.
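The filtering and sorting described above can be sketched in a few lines of Python. This is only an illustration of the idea, not llmarena.ai's implementation or API: the `ModelSpec` fields, the `shortlist` helper, and every model name and price in `CATALOG` are made-up placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    """Hypothetical record for one model; field names are assumptions."""
    name: str
    input_price_per_mtok: float  # USD per 1M input tokens (placeholder value)
    context_window: int          # tokens
    max_output_tokens: int
    modalities: frozenset


# Placeholder catalog: names and numbers are invented for illustration.
CATALOG = [
    ModelSpec("provider-a/small", 0.50, 128_000, 4_096, frozenset({"text"})),
    ModelSpec("provider-b/vision", 3.00, 200_000, 8_192, frozenset({"text", "image"})),
    ModelSpec("provider-c/long", 1.25, 1_000_000, 8_192, frozenset({"text", "image"})),
]


def shortlist(catalog, min_context=0, required_modalities=frozenset()):
    """Keep models that meet the context and modality needs, cheapest first."""
    matches = [
        m for m in catalog
        if m.context_window >= min_context
        and required_modalities <= m.modalities  # subset check
    ]
    return sorted(matches, key=lambda m: m.input_price_per_mtok)


picks = shortlist(CATALOG, min_context=150_000,
                  required_modalities=frozenset({"image"}))
print([m.name for m in picks])
```

Sorting by a different column, such as `context_window` or `max_output_tokens`, only requires changing the `key` function.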

Use Cases

- Choose a model for your product: use llmarena.ai's side-by-side comparisons, role-based filters, and modality and context-window views to weigh output capacity, routing options, and trade-offs across providers.

- Forecast and reduce deployment costs: use the built-in pricing calculator and token-pricing comparison to estimate per-request expenses, compare long-context and standard models, and pick the most cost-effective option for production.

- Prototype and research capabilities quickly: filter for long-context and multimodal models, run side-by-side spec and routing comparisons, and select models to integrate into experiments or features, whether you are a developer, ML engineer, or product manager.
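For the cost-forecasting use case, the per-request arithmetic behind a token-pricing calculator can be approximated as follows. This is a rough sketch that assumes prices are quoted in USD per one million tokens, with separate input and output rates. The function names and the example prices are hypothetical and are not taken from llmarena.ai.

```python
def request_cost(input_tokens, output_tokens,
                 input_price_per_mtok, output_price_per_mtok):
    """Estimated USD cost of one request.

    Assumes prices are per 1M tokens, billed separately for input and output.
    """
    total = (input_tokens * input_price_per_mtok
             + output_tokens * output_price_per_mtok)
    return total / 1_000_000


def monthly_cost(cost_per_request, requests_per_day, days=30):
    """Scale a per-request estimate to a monthly figure (flat daily traffic)."""
    return cost_per_request * requests_per_day * days


# Placeholder workload and prices, chosen only to show the arithmetic.
per_request = request_cost(
    input_tokens=2_000, output_tokens=500,
    input_price_per_mtok=3.00, output_price_per_mtok=15.00,
)
print(f"per request: ${per_request:.4f}")
print(f"per month:   ${monthly_cost(per_request, requests_per_day=10_000):,.2f}")
```

Running the same calculation with each candidate model's prices gives a like-for-like cost comparison for a fixed workload.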

Who Is It For

- Software developers

- Machine learning engineers

- Product managers

- Data researchers

- Technical analysts